How AI-driven feedback loops could make things very crazy, very fast

A primer on the intelligence explosion

When people picture artificial general intelligence (AGI), I think they often imagine an even smarter version of ChatGPT. But that’s not where we’re headed.

The frontier AI companies are trying to build a fully fledged ‘digital worker’ that can go and complete open-ended tasks like building a company, overseeing scientific experiments, or controlling military hardware. If they succeed, it would create totally different dynamics from existing LLMs, and have much wilder consequences.

The reason is the effect of feedback loops that could accelerate the pace of societal change by 10 or even 100 times.

The feedback loop that’s received the most attention in the past is the one in algorithmic progress. If AI could learn to improve itself, the argument goes, maybe it could start a singularity that leads rapidly to superintelligence.

But other feedback loops could still make things very crazy, even without superintelligence. They may just take five to 20 years rather than a few months. The case for an acceleration is more robust than most people realise.

This article will outline three ways a true AI worker could transform the world, and the three feedback loops that produce these transformations, summarising research from the last five years.

While the first concern most people have about AGI is mass unemployment, things could get a lot weirder than that, even before mass unemployment becomes possible. What’s at stake is an entirely new economic order and pace of change, with major implications for the best ways to do good, no matter what issues you’re focused on today.

Throughout, I don’t try to assess whether or when this sort of digital worker will be ready to deploy, but rather assume capabilities will continue to advance, and explore what happens next.

1. The intelligence explosion

Algorithmic feedback loops

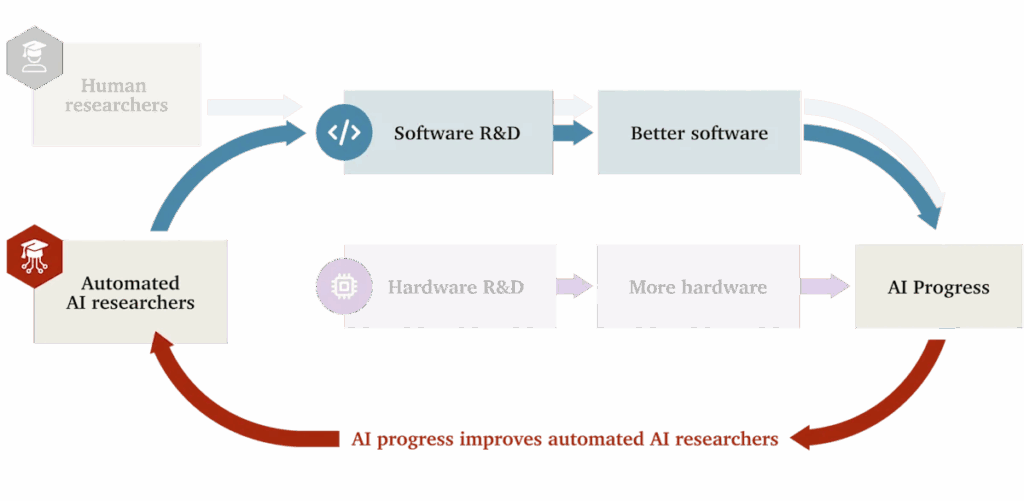

In the 1950s and 60s, Alan Turing and I. J. Good saw that if AI began to help with AI research itself, then progress in AI research would speed up, which would lead to AI becoming even more advanced, perhaps producing a ‘singularity’ in intelligence.1 Back then this was a purely theoretical argument, but in the last five years we’ve gained much more empirical grounding for how this (and other) feedback loops could work.

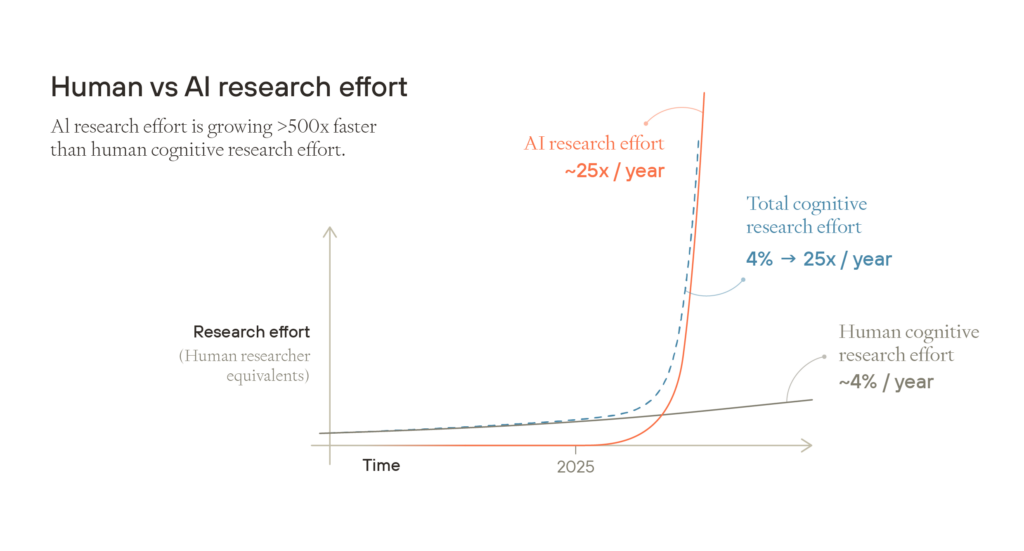

The leading AI companies today already use AI extensively to aid their own research, especially to help with coding training, tests, and experiment scaffolding.2 So far, the overall boost to the productivity of these researchers still seems relatively small, perhaps 3–30%.3 But as AI tools improve, the boost to their productivity will increase.

Now imagine that the process continues and the models keep getting better. Eventually, they become able to do the job of a junior engineer, and then a mid-level engineer, and continue to improve from there.4

If current models could produce work comparable to that of a mid-level engineer, then given the amount of computing power already available in datacentres today, it would be possible to produce output equivalent to millions of competent engineers working on AI research.5 There are probably fewer than 10,000 human researchers working on frontier AI today, so this would be similar to each human researcher having the equivalent of 100 assistants.

Next, imagine that AI continues to improve, and eventually these models start to do the work of even top researchers, with minimal human direction.

No one knows exactly how much that would speed up progress, but much comes down to a single question:

If you double the amount of research effort going into AI algorithms (holding the number of chips constant), do the algorithms at least double in quality?

If the answer is yes, then each time the number of digital AI researchers doubles, it unlocks advances that allow you to run AIs that are twice as effective, which then allows the population of digital researchers to double again, and so on, until you approach some other limit.

Empirical estimates of the returns to past algorithmic research suggest that, while this ratio (doublings of algorithm quality per doubling of research effort) could be below one, there’s a good chance it’s greater than one, which would start a positive feedback loop.
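To see why the threshold of one matters, here is a minimal sketch under a toy model that is my own assumption, not the model used in the research cited here. Software quality `S` equals cumulative research input `R` raised to a returns parameter `r`, and research input accumulates at a rate proportional to `S`, because better software means more effective digital researchers. The function reports how long each successive doubling of `S` takes, in arbitrary time units.

```python
import math


def doubling_times(r, n_doublings=5):
    """Time taken by each successive doubling of software quality S.

    Toy model (an illustrative assumption): S = R**r and dR/dt = S,
    where R is cumulative research input and r is the returns parameter.
    """
    times = []
    for k in range(n_doublings):
        # S doubles each time R is multiplied by 2**(1/r).
        r0 = 2 ** (k / r)
        r1 = 2 ** ((k + 1) / r)
        if math.isclose(r, 1.0):
            # dR/dt = R gives plain exponential growth.
            t = math.log(r1 / r0)
        else:
            # Closed-form integral of dR / R**r between r0 and r1.
            t = (r1 ** (1 - r) - r0 ** (1 - r)) / (1 - r)
        times.append(t)
    return times


for returns in (0.5, 1.0, 2.0):
    print(returns, [round(t, 3) for t in doubling_times(returns)])
```

In this toy setup, returns above one make each doubling arrive faster than the last, returns of exactly one give steady exponential growth, and returns below one make each doubling slower, so the loop fizzles.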

The next question is how quickly the feedback loop fizzles out as it runs into other constraints. The most complete model of both effects I’ve seen is by Tom Davidson, who currently works at Forethought, an Oxford-based research institute founded to study the impact of AI. In March 2025, Tom estimated we’d most likely see three years of AI progress condensed into one year, and it’s possible we’d see as many as 10.6

What would three years of progress in one year look like? As algorithms have become more efficient, the number of AI models you can run on a given number of computer chips has increased by more than a factor of three per year over the past five years.7 So if you were to start with 10 million digital workers, seeing three years of progress condensed into one would mean that one year later, you could run about 270 million of them.
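The arithmetic behind that figure can be checked in a couple of lines. This is just a sketch of the calculation in the paragraph above; the function name is hypothetical, and it assumes efficiency gains compound at a flat factor of three per year of progress.

```python
def copies_runnable(start_copies: int, gain_per_year: int, years_of_progress: int) -> int:
    """Number of model copies runnable on the same chips after efficiency gains compound."""
    return start_copies * gain_per_year ** years_of_progress


# 10 million digital workers, 3x per year, three years of progress
print(copies_runnable(10_000_000, 3, 3))  # 270000000
```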

These models would also be smarter. Three years of progress is more than the gap between the original GPT-4, which sucked at math, science, and coding, and GPT-5, which can answer known scientific questions better than PhD students in the field and won gold at the Maths Olympiad.8 Once AI gets close to being able to do AI research, we could see this kind of leap in under a year, starting from a point where the models are already around human level.

Early discussions were concerned with whether it could happen literally overnight (‘foom’), but today few people think that’s plausible. It still takes time to run experiments and do training runs. But it could unfold on a scale of months, arriving in a world that looks otherwise similar to today and creating massive disruption — and the process won’t stop there.

Hardware feedback loops

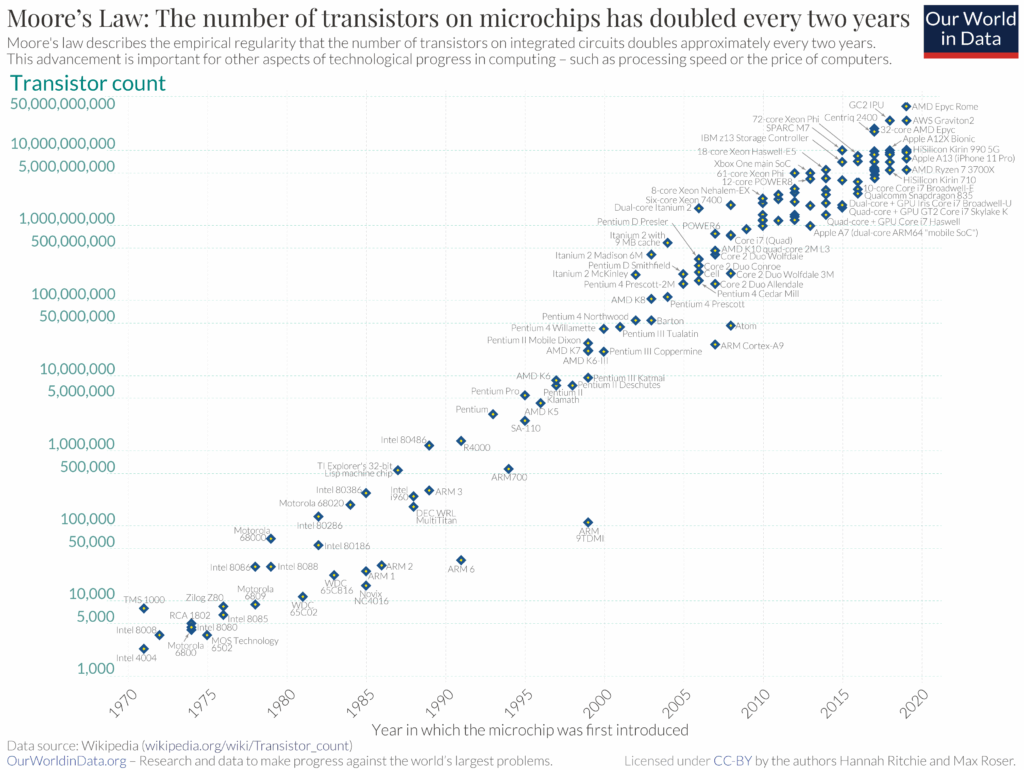

Today, the number of AI chips produced is doubling roughly every year.9 If that trend continues, and you can run 270 million AIs in one year, then you’d be able to run about 540 million the next. There would also be twice as much computing power available for AI training, so they’d become smarter too.

If each chip costs about $2 per hour to run, but can do the work of a human knowledge worker, those chips could generate $20 or even $200 of revenue per hour. Chip production would become one of the world’s biggest priorities, seeing not hundreds of billions, but trillions of dollars of investment. AI companies would direct the hundreds of millions of AI workers at their disposal to the task of accelerating chip production as much as possible, so it’s likely chip production would accelerate too.10

More chips would generate even more revenue, which would pay for even more chips, which would make AI even better. This is the chip hardware-driven feedback loop, and it has stronger evidence behind it than the algorithmic one.11

This feedback loop is likely to work because each time total computing power doubles, there’s twice as much available for both inference and training.12 Twice as much inference compute means you can run twice as many models, which naively means they should be able to earn (almost) twice as much revenue. On top of that, twice as much training compute means those models will be smarter and more efficient, making them more useful, meaning revenue will likely increase even more.

In fact, this seems to be what’s already happening. Each year, frontier AI companies increase the amount of computing power at their disposal by about 3–4 times — but their revenues have been increasing by about 4–5 times per year.13
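One way to read those figures is as an elasticity: how many doublings of revenue accompany each doubling of computing power. The sketch below computes that for each combination of the quoted ranges. Which ends of the ranges belong together is not stated, so the pairings are my assumption, and the function name is hypothetical.

```python
import math


def revenue_elasticity(compute_growth: float, revenue_growth: float) -> float:
    """Doublings of revenue per doubling of compute, from yearly growth multiples."""
    return math.log(revenue_growth) / math.log(compute_growth)


# Yearly multiples from the text: compute 3-4x, revenue 4-5x.
for compute, revenue in [(4, 4), (3, 4), (4, 5), (3, 5)]:
    print(compute, revenue, round(revenue_elasticity(compute, revenue), 2))
```

Every pairing gives a value of at least one, which is what the feedback loop needs: revenue keeps pace with, or outruns, the computing power that generates it.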

Moreover, each time investment into chips has doubled, the amount of available computing power has increased much more than that. From 1971 to 2011, investment in semiconductors increased by 18 times, but the amount of computing power in a chip increased one million times due to innovation and economies of scale. The paper “Are ideas getting harder to find?” shows that doubling investment into computer chips has led to a five times increase in computing power.14

These two effects compound: each time AI companies double their revenue, they can reinvest in chips that will give them more than twice as much computing power in the next generation. Then each time computing power doubles, it can be used to run more than twice as many better-quality digital workers, who can earn more than twice as much revenue. (At least until other limits are hit, which I’ll discuss later.)

Where could this end up?

Whether it’s via the algorithmic or hardware feedback loop, we could quite quickly end up in a world with many billions of AI workers that can be hired for tens of cents per hour. It’s possible that these AIs quickly reach what’s been called artificial ‘superintelligence’ (ASI): AI that’s more capable than humans at basically every cognitive task. This is no longer just an idea, but rather is the explicit goal of the leading AI companies who’ve raised hundreds of billions of dollars in pursuit of it.15

Superintelligence could mean AIs that are capable of much greater insights than humans. But it could also mean AIs that are about equally smart, but outstrip us due to other advantages. Picture the most capable human you know, then imagine they could crank up their processing speed to think sixty times more quickly — a minute for you would be like an hour to them. Now imagine they could make copies of themselves instantly, and that everything one copy learned could be shared with the others. Imagine a firm like Google, but where the CEO can personally oversee every worker, and every worker is a copy of whoever is best at that role.

Whether we end up with superintelligence or a vast number of better-coordinated human-level digital workers, this process has been called the ‘intelligence explosion.’ It’s maybe more accurate to call it a ‘capabilities explosion,’ because AI wouldn’t only improve in terms of narrow bookish intelligence, but also in creativity, coordination, charisma, common sense, and any other learnable ability.

Experts in the technology believe there’s a 40–60% chance the intelligence explosion argument is broadly correct, and a 10% chance AI becomes vastly more capable than humans within two years after AGI is created16 — though this seems low to me.

2. The technological explosion

What would happen after an intelligence explosion has started? There are about 10 million scientists in the world today.17 If these hundreds of millions of AIs became as productive as human scientists, then the effective number of researchers would increase by 100-fold (and keep growing). Even though there are many other bottlenecks to science besides the number of scientists, this would almost certainly speed up the rate of technological progress. Forethought have also estimated that we could see 100 years of technological progress in under 10, and maybe a lot more.18 We could call this the ‘technological explosion.’19

To get a sense of how wild this would be, imagine for a moment that everything discovered in the 20th century was instead discovered between 1900 and 1910. Quantum physics and DNA sequencing, computers and the internet, penicillin and genetic engineering, jet aircraft and space satellites would all happen within just two or three election cycles.

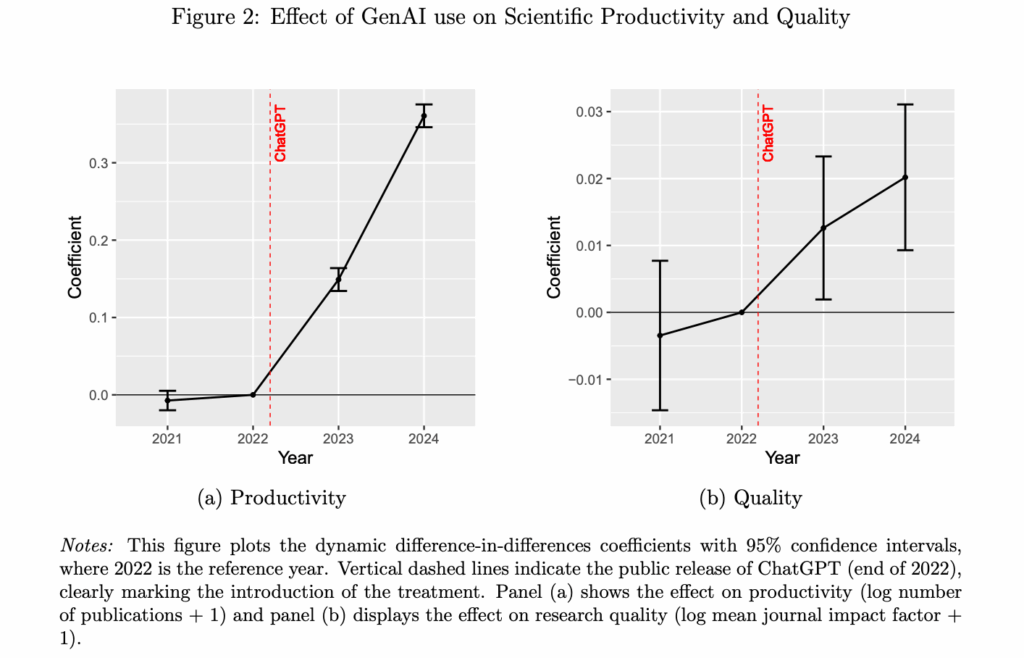

Initially this could look like specialist AI tools, like AlphaFold, which solved the protein folding problem and earned its creators the Nobel Prize. More recently, a paper found that scientists using AI were producing about 30% more papers in 2024 compared to similar scientists who weren’t, and these papers were, if anything, higher quality.20

Eventually, it could look like AI models that can answer questions humans don’t yet know how to answer, or run huge numbers of automated experiments and effectively do work that would have taken hundreds of human scientists (or been impossible) before. The CEO of Anthropic sketched how this might look for biomedical research in his AI-optimism manifesto “Machines of loving grace.”

Much intellectual work, like maths or philosophy, could proceed virtually, and so could unfold very fast. However, what these digital scientists could do would quickly become limited by their inability to interact with the physical world. Robotics would then become the world’s most profitable activity. This leads us onto…

3. The industrial explosion

Robotic worker feedback loops

Soon after the outbreak of World War II, American car factories were converted to produce military planes. Today, car factories produce about 90 million cars per year,21 and if they were converted to produce robots, it’s possible they could produce 100 million to one billion human-sized robots per year.22

Without robots, the intelligence explosion fizzles out at the point where disembodied intelligence is no longer useful. Maybe everyone already has 100 PhDs checking every tiny decision. The revenue an additional AI chip can earn would drop below the cost of producing one.

However, AI combined with advanced robotics can potentially do almost every economically important task, including building the factories, solar panels, and chip fabs needed to produce more robotic workers.

This means if a bunch of robotic workers can do some work and earn some money, then that can be used to construct more robotic workers. That larger group of robotic workers can then earn even more revenue, which can be used to construct even more robots, and so on. What effect would this have?

Epoch AI is one of the leading research groups at the intersection of AI and economics, and has created some of the only models that explore what a true human-level robotic worker would mean for the economy. They show, for instance, that if it becomes possible to produce a general-purpose robot for under $10,000, and you plug that into a standard economic growth model, the total quantity of goods and services produced would start to grow 30% per year.23 This has been called the “industrial explosion.”

It happens for the simple reason that if you have twice as many workers, and twice as many tools and factories, then they can produce about twice as many outputs. This is a widely accepted idea in economics with empirical support, called ‘constant returns to scale.’24

This doesn’t happen in the current economy because if output doubles, while that can be reinvested into the capital stock, it can’t be reinvested to increase the number of workers.

Giving the same number of workers a factory that’s twice as big doesn’t mean they can produce twice as much, so output as a whole doesn’t grow that much. But when it’s possible to simply build a new robotic worker, that constraint no longer applies. This leads to growth in output that is still exponential like today, but much faster.

If the AI workers can also contribute to innovation, then as the population of AIs grows, the amount of innovation they can do also increases, which means each AI worker gets more powerful technological tools, which increases their output even further (arguably this is a fourth ‘productivity’ feedback loop that results from the technological explosion). In this scenario, output accelerates over time, growing superexponentially.25
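The three regimes described above can be sketched with a toy Cobb-Douglas economy. This is an illustration under my own assumptions, not Epoch’s model: the capital share, reinvestment rate, equal split of investment between tools and robots, and the innovation rule are all hypothetical choices made to keep the example short.

```python
def growth_rates(years, robots=False, innovation=False, alpha=0.4, reinvest=0.3):
    """Yearly output growth rates in a toy Cobb-Douglas economy.

    Output = tech * capital**alpha * labour**(1 - alpha), which has constant
    returns to scale in capital and labour. All parameter values are
    illustrative assumptions.
    """
    capital, labour, tech = 1.0, 1.0, 1.0
    output = tech * capital**alpha * labour ** (1 - alpha)
    rates = []
    for _ in range(years):
        invest = reinvest * output
        if robots:
            # Reinvested output builds tools and robotic workers in equal measure.
            capital += invest / 2
            labour += invest / 2
        else:
            # Today's economy: output can buy more capital but not more workers.
            capital += invest
        if innovation:
            # Hypothetical rule: a larger workforce produces more innovation.
            tech *= 1 + 0.01 * labour
        new_output = tech * capital**alpha * labour ** (1 - alpha)
        rates.append(new_output / output - 1)
        output = new_output
    return rates


print([round(g, 3) for g in growth_rates(5)])                                # growth fades
print([round(g, 3) for g in growth_rates(5, robots=True)])                   # growth holds steady
print([round(g, 3) for g in growth_rates(5, robots=True, innovation=True)])  # growth accelerates
```

With a fixed workforce, each extra unit of capital adds less than the last, so growth fades. Once workers can be built too, output scales in proportion to inputs and growth holds at a constant rate. Adding innovation that scales with the workforce makes the growth rate itself rise, which is the superexponential case.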

While an algorithmic feedback loop would likely peter out quite quickly as diminishing returns to algorithmic research are reached, the industrial explosion can keep accelerating until physical limits are reached. These could be very high.

As one illustration, Forethought argue that robot production would more likely be constrained by energy shortages than a lack of raw materials. If 5% of solar energy were used to run robots at around the efficiency of the human body, that would be enough to run a population of 100 trillion(!).26 And this ignores expansion into space.
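A rough order-of-magnitude check of that figure, using round numbers that are my own assumptions rather than Forethought’s inputs: about 1.7e17 watts of sunlight intercepted by the Earth, and about 100 watts to run one robot at the power draw of a human body.

```python
SOLAR_POWER_AT_EARTH_W = 1.7e17  # assumed: sunlight intercepted by Earth, roughly 170,000 TW
HUMAN_BODY_POWER_W = 100.0       # assumed: rough power draw of a human body
SHARE_USED = 0.05                # the 5% share quoted in the text


def robot_population(solar_w: float, share: float, watts_per_robot: float) -> float:
    """Number of robots that a given share of incoming solar power could run."""
    return solar_w * share / watts_per_robot


population = robot_population(SOLAR_POWER_AT_EARTH_W, SHARE_USED, HUMAN_BODY_POWER_W)
print(f"{population:.1e}")
```

Under these assumptions the result lands in the high tens of trillions, the same order of magnitude as the 100 trillion quoted above.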

The speed of an industrial explosion is ultimately limited by the minimum time in which it’s possible to build an entire production loop of solar panels, chip fabs, and robots. No one knows how fast that could be, but there are biological organisms, like fruit flies, that can replicate a brain and miniature ‘robot’ in about a week, so it could eventually become very fast.

A few common counterarguments

It’s also possible there are enough tasks that robots remain unable (or are not allowed) to do that an industrial explosion never gets started (despite the insanely large financial and military incentives to start one).

Financial markets don’t currently seem to predict any increase in economic growth, and economists remain skeptical of the possibility.

But when most economists try to model the effects of AI, they implicitly assume it remains a complementary tool to human workers. If you model the effect of a robot that can actually substitute for human workers, it’s pretty hard not to get explosive growth. Most of the arguments against explosive growth are just arguments that sufficiently autonomous robotic workers won’t be possible, not that explosive growth won’t follow if they are.

Another common response is that mass automation would make everyone unemployed, which would crash demand. But the initial stages would produce a boom in wages, as tasks that can’t yet be done by AI (including many blue collar jobs) become crucial bottlenecks and see increasing wages. In addition, more than half of Americans have a net worth over $100,000, and they would quickly become multimillionaires. Then about 25% of GDP is taxed, and most of that is redistributed as welfare. These forces would sustain demand even if employment drops.

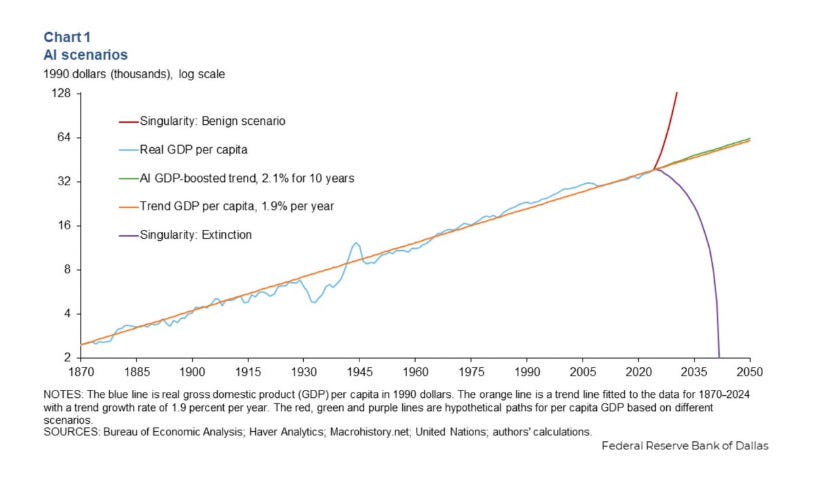

More and more economists are starting to take the possibility of explosive growth seriously, even if they haven’t truly internalised the implications, as in this report on how “AI will boost living standards” by the Dallas Fed:

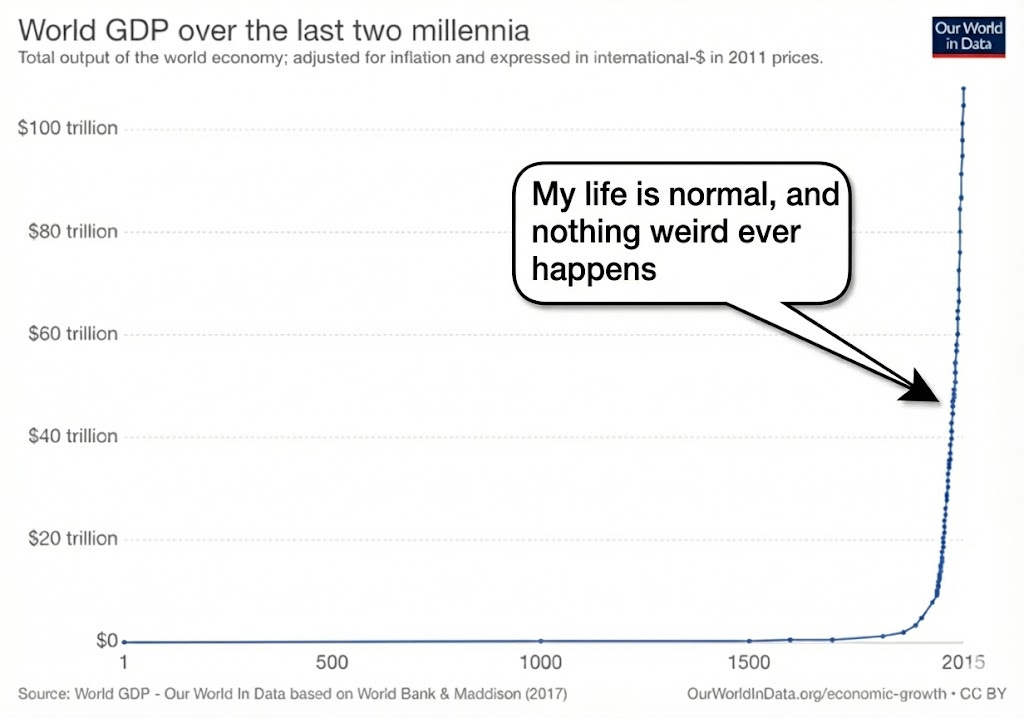

Another common objection is that these scenarios seem crazy and outside of the historical norm. But keep in mind that an economic acceleration has already been happening over the last few thousand years. Before the agricultural era, there was virtually no economic growth. After that, growth increased to perhaps 0.1% per year. During the industrial revolution, it accelerated again to over 1% per year.
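Converting growth rates into doubling times makes the scale of these shifts easier to compare. The sketch below uses the standard compound-growth formula. The 0.1% and 1% rates come from the paragraph above, and 30% is the Epoch figure quoted earlier in this article. The function name is hypothetical.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for output to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


for label, rate in [("agricultural era", 0.001), ("industrial era", 0.01), ("robot scenario", 0.30)]:
    print(f"{label}: doubles roughly every {doubling_time_years(rate):.1f} years")
```

The jump from the agricultural to the industrial era cut the doubling time by about a factor of ten. A move to 30% growth would cut it by more than that again.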

The rate of growth has been steady over the last 100 years, but that’s because the population stopped growing in line with the size of the economy. AI and robots would resume the old dynamic in which more output leads to a larger ‘population,’ and that dynamic leads to superexponential growth.

Two views of the future of advanced AI

It’s possible that AI won’t be able to carry out algorithmic research, scientific research, or many ordinary jobs any time soon. If additional investments in computing power stop increasing AI capabilities, or revenues aren’t high enough, then AI capabilities will gradually plateau.27

Perhaps AI will end up extremely capable in some narrow dimensions, like mathematics and coding, but there will remain so much it can’t do that the economy carries on as before.28 This is what happens with most technologies, even ‘revolutionary’ ones. Electric lights were a big deal, but once we all have them, we don’t buy ever more of them in a self-sustaining loop. The purpose of this article, however, is to explore what will happen if AI capabilities don’t plateau. Among people who’ve thought most about this question, views tend to divide into two main camps:

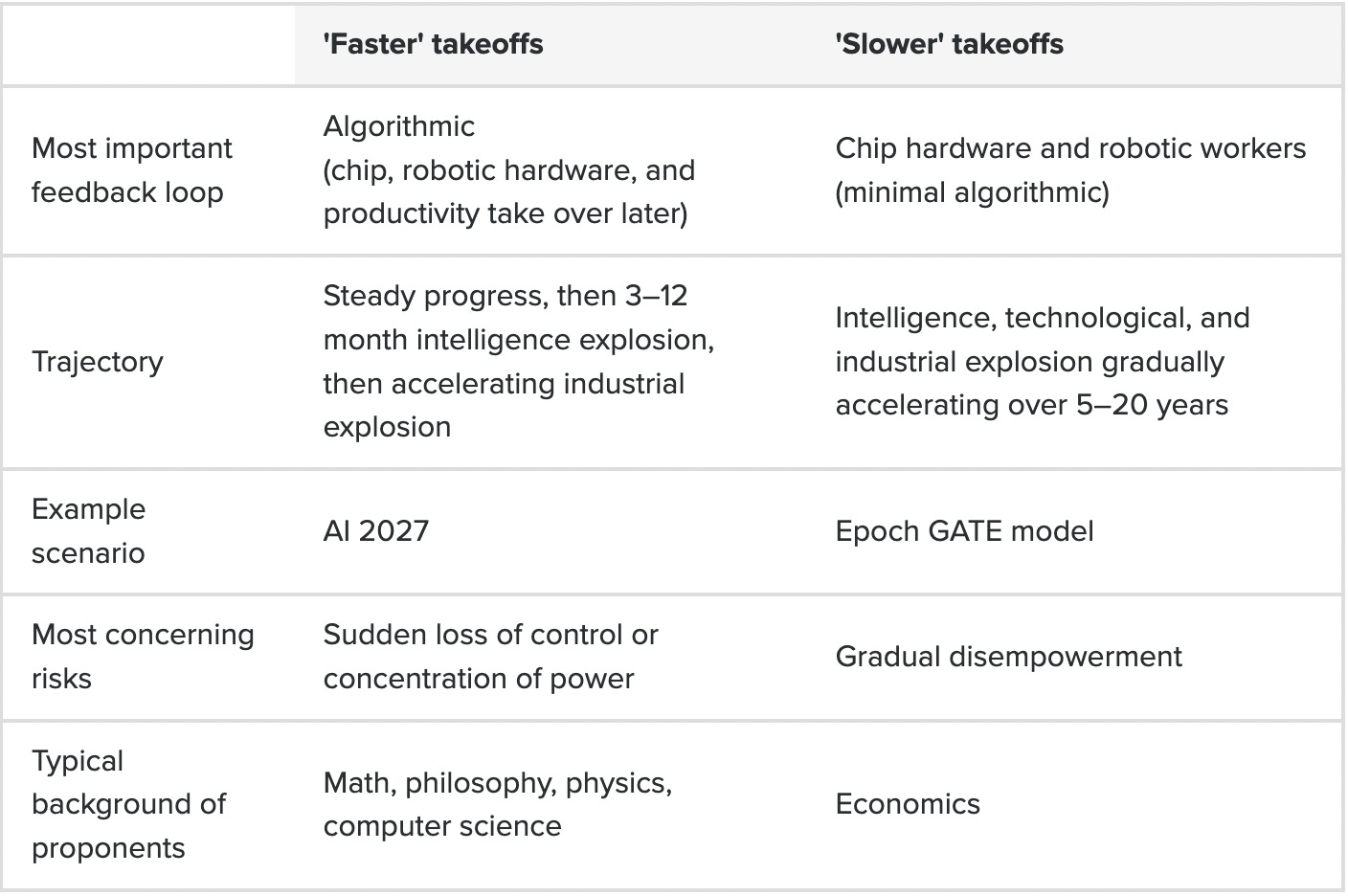

The first camp is most concerned about the algorithmic feedback loop. Maybe AI remains a long way from being able to do most jobs, but it turns out to be especially good at two things: coding and AI research. These are purely virtual tasks, with relatively measurable outcomes that match the current strengths of the models.

While daily life continues to look basically the same as before, somewhere in a datacentre, 10 million digital AI researchers are taking part in a self-sustaining algorithmic feedback loop. Less than a year later, there are 300 million smarter-than-human AIs (a “country of geniuses in a datacentre”28), now deployed to max out chip production, robotics production, scientific research, and then automation of the economy. These digital workers could drop into existing jobs, and so diffuse far faster than previous technologies.

This scenario is extremely important to prepare for, because it’s the most dramatic and dangerous. We could go from the normal world to one with superintelligent AIs in just a year or two. A single company could end up with 10 times or 100 times the intellectual firepower of the entire scientific community today. And this could happen in a world that looks pretty similar to today, before there is significant technological unemployment.

This is the kind of scenario explored in Situational Awareness or AI 2027, which looks at what would happen if an automated coder were created in 2027. I don’t think an automated coder will be created in 2027, but it’s very possible it’s invented within the next 10 years, and on balance, I think an algorithmic feedback loop is more likely than not (though I’m unsure how far it will go).

A scenario that seems quite likely to me now is one where AI progress continues and perhaps gradually slows after 2028, as it becomes harder and harder to scale up computing power. AI remains very jagged in its capabilities and unable to do the long-horizon planning, strategy, or continual learning that would make it autonomous, but is useful enough to generate substantial revenue and scientific breakthroughs, which drives continued investment. Then at some point in the 2030s, the final bottlenecks are overcome (or a new paradigm is created) and an algorithmic feedback loop starts, initiating a faster takeoff later in the decade.

Unlike AI 2027, this scenario anticipates a longer gap between things starting to get obviously crazy and a full intelligence explosion. This means society will have more time to prepare, but it also means the takeoff might happen in a world with more intense conflict and more robotic infrastructure already in place.

The second, slower takeoff camp thinks an algorithmic feedback loop isn’t possible, but they still think the intelligence, technological, and industrial explosions will happen. The difference is these explosions would need to be driven by the chip hardware, robotic worker, and productivity feedback loops instead.

This is the kind of scenario explored in Epoch’s GATE model — the first attempt to make an integrated macroeconomic model of AI automation. It starts at the point where an AI is created that can do 10% of economically important tasks, and models how reinvestment into computer hardware could drive revenue and automation ever higher.

Given their default assumptions, within five years, total GDP has doubled and the growth rate has reached 20%, and from there continues to accelerate. After 15 years, GDP is 30 times larger, there are 500 billion AI workers, and growth has reached 50% per year. Even if you add additional frictions, things still get pretty crazy pretty fast.
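As a consistency check on those figures, the sketch below converts the GDP multiples into average annual growth rates. It uses only the numbers quoted above (2x after five years, 30x after 15) and makes no claim about how GATE itself computes them. The function name is hypothetical.

```python
def average_annual_growth(multiple: float, years: float) -> float:
    """Constant annual growth rate that yields the given multiple over the period."""
    return multiple ** (1 / years) - 1


first_five = average_annual_growth(2, 5)         # GDP doubles in years 0-5
next_ten = average_annual_growth(30 / 2, 10)     # 2x -> 30x over years 5-15
whole_period = average_annual_growth(30, 15)     # 30x over all 15 years

print(f"{first_five:.1%}, {next_ten:.1%}, {whole_period:.1%}")
```

The averages come out at roughly 15% for the first five years and a little over 30% for the following ten, which fits a growth rate that climbs past 20% by year five and reaches 50% by year 15.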

What’s clear is that — faster, slower, or somewhere in between — society isn’t remotely prepared for any of these scenarios.

As a result, we could see a dramatic expansion in wealth and technology, which would make it far easier to tackle many global problems. But it would also pose novel and truly existential risks. What are they?


"...In the 1960s, Alan Turing and I. J. Good saw that if AI began to help with AI research itself, then progress in AI research would speed up..."

You of course mean:

"...In the 1960s, *Alan Turing's Philosophical Zombie* and I. J. Good saw that if AI began to help with AI research itself, then progress in AI research would speed up..."

Could there be a robotics bottleneck? Like a situation where disembodied intelligence is extremely advanced but where the relatively slow progress of robotics (either occurring naturally or artificially made slow through regulation) limits the ability of AI to take over the most embodied kinds of human jobs, like plumbing, piano playing, or charisma-based jobs (e.g. politics)?

It seems to me that a lot of embodied knowledge is not currently stored in an AI readable format like a textbook. So to reach AI doing 100% of human tasks we would first need a human-like or superhuman robot (which seems like a huge task requiring a lot of cooperation between human experts and requires quite a lot of material procurement which, again, AI can't get on its own) and then we would need to train it in order to reach proficiency in that discipline. Two steps that in my opinion could be much more easily slowed down with regulation than software progress... Perhaps an avenue to consider for AI safety?

Separately, I was wondering how much effort is being done right now in producing machines or technologies that detect data-centre activity, even when hidden deep underground. Such a technology might be useful if we need to urgently pull the plug on AI development whilst it's still disembodied...