Do we already have AGI?

What AGI means, and why we don’t have it yet.

More and more people are saying Claude Code and GPT-5.3 are already AGI. Are they right?

Short answer: no.

Long answer: on the most prominent definitions, current AI is superhuman in some cognitive tasks but still worse than almost all humans at others. That makes it impressively general, but not yet AGI.

What is AGI?

Only 70% of people at the biggest AI conference seemed to know what ‘AGI’ stands for, and it’s only 10% among the public.

‘AGI’ stands for artificial general intelligence. The term was popularised around 2007 by Ben Goertzel as a contrast to ‘narrow’ AI – AI that can only do a small range of tasks, like playing chess.

A general AI is able to do a wide range of tasks, in the same way humans can learn to catch a ball, do maths, and sell hot cakes all in a single package.

The idea was made more precise in a 2007 paper by Marcus Hutter and Shane Legg (a co-founder of DeepMind, which pioneered the recent wave of AI), who defined intelligence as “an agent’s ability to achieve goals in a wide range of environments”.

Legg and collaborators at Google DeepMind further operationalised this definition in a 2023 paper, “Levels of AGI”. Imagine a list of all the possible tasks an AI can do, then consider two dimensions:

Generality: how many tasks can it do?

Capability: how well can it do each task?

An AI can be narrow and weakly capable (like a chess-playing AI that sucks); it can be narrow and strong (like IBM’s Deep Blue); general and weak (perhaps like GPT-2); or general and strong.

Both of these scales are continuous – ultimately it’s arbitrary when an AI becomes general enough to be called an ‘AGI’.

However, a natural spot to draw the line is at the human level: if an AI can complete a wide range of tasks with similar or greater ability than humans, then it’s an AGI.

This is what most definitions do. Geoffrey Hinton, Turing Award winner and ‘godfather of AI’, defined AGI as AI that is “at least as good as humans at nearly all of the cognitive things that humans do,” as does Wikipedia.

But we still face some choices. Which humans are we talking about? People point out Claude and GPT can already do things most humans can’t (like win gold in the maths Olympiad), but being able to beat randomly selected humans isn’t very interesting. We don’t hire randomly selected humans to do most jobs; we hire humans who are specialised in them.

The DeepMind paper draws the comparison to ‘skilled’ humans, and then defines different levels of AGI based on when it can beat 50th percentile skilled humans (‘competent AGI’), 90th percentile (‘expert AGI’), and all humans (‘superintelligent AI’).

What tasks are counted? An AGI should be able to do “a wide range of non-physical tasks, including metacognitive ones”. Metacognitive skills are those that involve thinking about one’s own thinking, such as planning and self-evaluation.
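To make the thresholds concrete, here is a minimal sketch of how the levels described above could be applied to a profile of per-task results. It is an illustration rather than the paper's method: the function name `level_for`, the `min_share` parameter (how large a share of tasks counts as “a wide range”), the reading of “all humans” as the 100th percentile, and every number in the example profiles are assumptions made up for this sketch.

```python
# Thresholds as described in the text: the percentile of skilled humans
# an AI must match or beat. Level names follow the article's wording.
# Treating "all humans" as the 100th percentile is an assumption.
LEVELS = [
    ("superintelligent AI", 100.0),
    ("expert AGI", 90.0),
    ("competent AGI", 50.0),
]


def level_for(task_percentiles: dict[str, float], min_share: float = 0.9) -> str:
    """Return the highest level whose threshold is met on at least
    `min_share` of the listed tasks (a hypothetical stand-in for
    "a wide range of tasks")."""
    if not task_percentiles:
        raise ValueError("need at least one task")
    n = len(task_percentiles)
    for name, threshold in LEVELS:  # checked from the highest level down
        met = sum(p >= threshold for p in task_percentiles.values())
        if met / n >= min_share:
            return name
    return "below competent AGI"


# Invented, illustrative percentiles: strong on some tasks, weak on others.
jagged_profile = {
    "coding": 92.0,
    "maths": 95.0,
    "multi_day_projects": 5.0,
    "web_navigation": 10.0,
    "calibration": 20.0,
}
print(level_for(jagged_profile))
```

The point of the sketch is that a system can clear the expert threshold on several tasks and still miss the lowest level, because the definition asks for breadth as well as peak ability.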

So on this definition, where do we stand today?

Do we already have AGI?

The paper (even in its 2025 update) says we’ve reached ‘emerging AGI’ but not ‘competent AGI’. Demis Hassabis, the co-founder and CEO of DeepMind, said in early 2026 that he thinks AGI “could arrive in 5 years”, implying it’s not here yet. Why?

Current systems are already superhuman at reading text and recalling information (e.g. they know more languages than any human).

They are expert-level at completing several-hour-long coding tasks and answering mathematical and scientific questions with known answers. They are also increasingly able to do other knowledge work tasks that take under a day.

However, they are still worse than almost all humans at:

Managing anything that takes more than a couple of days to finish, like organising a contractor to decorate your bathroom.

Visual manipulation and navigation: they still often fail at simple web navigation and can’t pilot a drone.

Adversarial social interactions, such as managing a vending machine when someone is trying to scam them.

Many metacognitive skills such as learning from experience longer than their ~1 week context length, or understanding how confident they are in a statement.

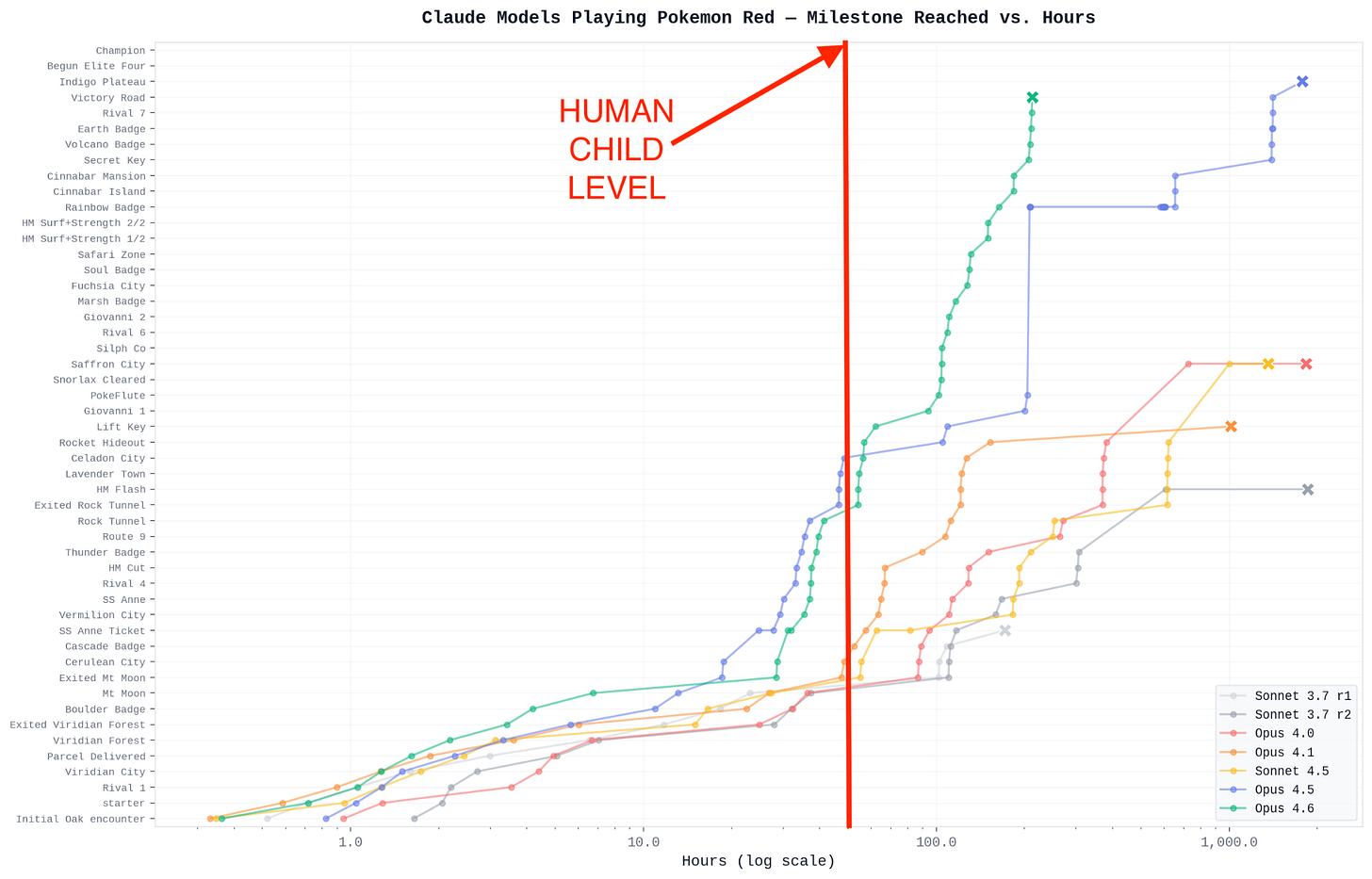

Frontier models can’t even beat children at Pokemon – a multiday, agentic task, but one that’s still much easier and more neatly defined than most white collar jobs.

(Not to mention physical capabilities like making a sandwich.)

They’re also still weaker than human experts at some especially important cognitive skills, like doing novel research or leading a company.

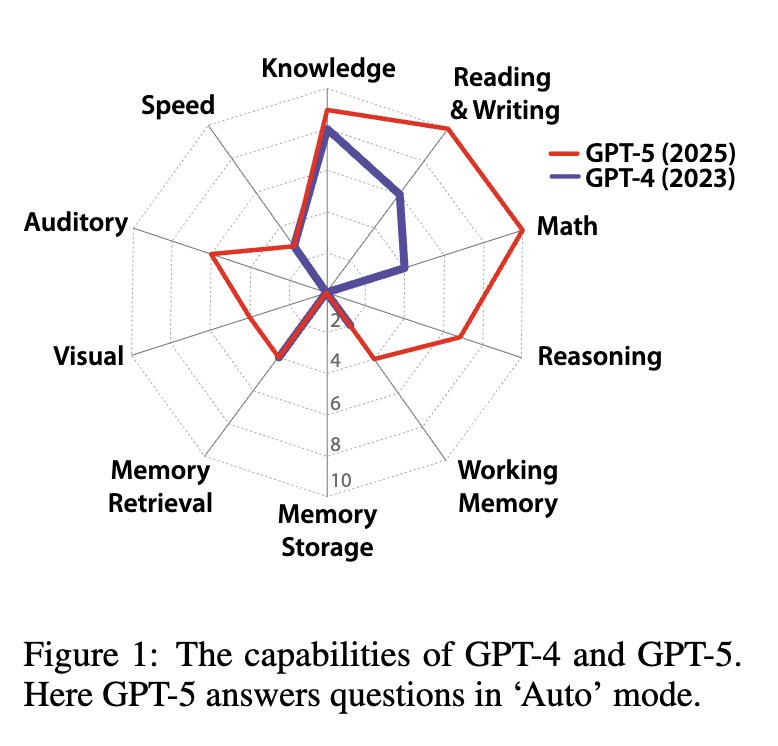

In 2025, Yoshua Bengio, Turing Award winner and one of the most cited AI scientists, along with 20+ other prominent people, built on the 2023 DeepMind paper in a new paper, “A definition of AGI”. Rather than vaguely saying an AGI needs to be able to do “a wide range” of tasks, it made a list of 10 key cognitive capabilities, and compared AI to human performance on them.

A score of 100% represents the human level, and GPT-5 scored 57%. In particular, it scored near human level on knowledge, reading, writing and maths, but was way below on speed, memory, visual, and auditory processing.

GPT-5.4 in an agentic harness will be better (especially for memory) but I highly doubt it would reach 100% on all dimensions. (I’d also guess reaching 100% won’t actually be sufficient for AGI, because there will be missing abilities that are hard to create a benchmark for.)

The third main type of definition is economic. OpenAI defines AGI as “highly autonomous systems that outperform humans at most economically valuable work”. To meet this definition, it would need to be the case that for almost any job, you’d prefer to hire an AI over a human. That bar has clearly not been reached.

A common response is that the ‘raw’ intelligence is already there to become an AGI – it’s just a question of adding the right scaffolding to turn it into an agent. That seems wrong: some of the gaps seem like gaps in raw skills. But even if true, getting the right scaffolding is a big part of the challenge. If it’s not been built yet, then we don’t yet have AGI.

I think a more accurate understanding is that capabilities are very jagged: AI today is superhuman in some ways, but subhuman in others. So should we call this AGI?

What’s the point of definitions anyway?

Definitions help us identify important concepts. You can call Claude Opus 4.6 an AGI if you want, and that helps highlight that it’s far more general than past AI systems.

But it’s also confusing. As we’ve seen, ‘AGI’ is most commonly used to refer to an AI that’s more generally capable than skilled humans at most cognitive tasks, and current systems aren’t there yet.

And there’s a reason for choosing the human level. AI with abilities narrower than humans will remain a tool, which, like other technologies, makes humans more productive. However, an AGI that can truly do almost everything a human can do could act as an independent agent, making it more like an expansion in the labour pool than a tool, or even a new species. This could lead to totally different dynamics, such as explosive economic growth or human disempowerment.

An AI that can do almost everything humans can do could also do AI R&D and scientific research, which could cause an intelligence explosion and 100 years of scientific progress in 10.

Other ‘transformative’ technologies like electricity, computers or the internet kept GDP growing at a steady 2% a year and scientific progress advancing at a steady rate. True AGI could be unlike any of those. It wouldn’t just keep growth at 2%; it could accelerate the rate of progress, making it more akin to the industrial revolution than a normal technological wave.

Insisting that we already have AGI is rhetorically deflationary. If AGI is such a big deal, why aren’t things crazier? When we have true AGI in the sense of Hassabis, Hinton and OpenAI, things are going to get much wilder than today, and I want to make sure people are warned about that.

Transcending ‘AGI’

Rather than debate how to define a contested term, the ideal would be to stop saying ‘AGI’ and switch to something more precise.

This is what AI Futures, the group behind AI 2027, do. In their most recent timelines model, they define a whole set of important waypoints:

Automated Coder (AC). An AC can fully automate an AGI project’s coding work, replacing the project’s entire software engineering staff.

Superhuman AI Researcher (SAR). A SAR can fully automate AI R&D.

Superintelligent AI Researcher (SIAR). The gap between a SIAR and the top AGI project human researcher is 2x greater than the gap between the top AGI project human researcher and the median researcher.

Top-human-Expert-Dominating AI (TED-AI). A TED-AI is at least as good as top human experts at virtually all cognitive tasks.

Artificial Superintelligence (ASI). The gap between an ASI and the best humans is 2x greater than the gap between the best humans and the median professional, at virtually all cognitive tasks.
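The two “2x gap” definitions above can be written as a simple inequality. This is a sketch under assumptions: $s(\cdot)$ is a hypothetical skill measure on some shared numeric scale, which the definitions presuppose but do not specify, and the gap condition is read as a minimum threshold.

```latex
% s(x): hypothetical skill score of x on a common scale (an assumption).
% SIAR: compared with the top and median human researchers at the AGI project.
s(\text{SIAR}) - s(\text{top researcher})
  \;\ge\; 2\,\bigl(s(\text{top researcher}) - s(\text{median researcher})\bigr)

% ASI: the same form, at virtually all cognitive tasks.
s(\text{ASI}) - s(\text{best human})
  \;\ge\; 2\,\bigl(s(\text{best human}) - s(\text{median professional})\bigr)
```

For example, on an invented scale where the median researcher scores 50 and the top researcher scores 80, the human gap is 30, so a SIAR would need to score at least 80 + 2 × 30 = 140.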

Each of these is an important point on the route towards recursive self-improvement and transformative systems.[1] However, the list is a lot less catchy than ‘AGI’.

(Since this article was published, Ajeya Cotra and Helen Toner also proposed a set of more precise concepts.)

Alternatively, we could try to define a single broad term to replace it. Holden Karnofsky defined ‘transformative AI’ as an AI capable of causing socioeconomic change of a similar scale to the industrial revolution.

This is nice because it picks out what most matters about AI: it might not be a normal technology, but rather one that leads to a fundamentally different socioeconomic regime. It’s also helpful because it allows for the possibility of transformative systems that aren’t very general, such as AIs that are amazing at scientific research, but still can’t do most other jobs.

A downside is that it doesn’t tell us anything about what might be transformative and what won’t. It also hasn’t caught on – almost all search traffic is for “AI” and “AGI”.

Helen Toner also suggested ‘human-level AI’, which is nice because it makes it clear the relevant bar is the human level, and also makes it obvious that it’s vague. But it could also prove confusing: Helen has also argued that AI will remain extremely jagged long into the transformational period, so we could have transformative systems that don’t feel very human-like.

Another option is to avoid having any term, and just say what we mean each time. In Situational Awareness, Leopold Aschenbrenner talks about “a drop-in remote worker”, i.e. an AI that you can hire to do almost any remote work job, including scientific research. Dario Amodei, the CEO of Anthropic, talks about “a country of geniuses in a datacentre”.

So what should we do?

First, if you hear someone talking about AGI, make sure to check their definition.

Second, according to the most prominent definitions, we don’t yet have AGI. Here’s a recap:

Four of the most prominent definitions of AGI:

DeepMind (Legg et al., 2023): 50th percentile of skilled humans at a wide range of non-physical tasks

Bengio et al., 2025: Matches human cognitive versatility across 10 key capabilities

Hinton: At least as good as humans at nearly all cognitive tasks

OpenAI: Outperforms humans at most economically valuable work

Third, whenever possible, talk about something more precise. The types of AI that seem most important to me in terms of their potential transformative effects are those that can:

Automate coding, because this is an important waypoint to automating AI R&D, might be achieved fairly soon, and would generate a lot of the revenue to fund further research.

Automate AI R&D, because this could accelerate AI progress, and it could also happen before AI that can do most other jobs is created.[2]

Do most economically important remote work tasks (at the same or lower cost than a skilled human),[3] because this could generate huge revenues to fund further AI research, and is an important waypoint.

Automate scientific research, because this could accelerate technological progress.

Automate its own factors of production, including making chips, solar panels and software, because this could create a feedback-loop leading to an industrial explosion.

Do most economically important tasks (including robotic manipulation) more efficiently than humans, because this would result in human economic obsolescence.

None of these have been achieved yet, but that doesn’t mean they won’t be soon. I think there’s about a 25% chance that AI that can automate AI R&D is achieved before 2029, and this could unlock fully general AI soon after. Likewise, mere trend extrapolation of revenues suggests we’ll have AI capable of doing a wide range of jobs by 2030.

In short, all of the following are true, but most people can only focus on one at a time (ht):

AI is still terrible at many things

AI is already great at many things

AI will get much better again

[1] If you’re also skeptical of an algorithmic feedback loop, and think AI progress will be driven by accumulation of revenue and compute, then you’d want a different set of waypoints, such as Leopold’s “drop-in remote worker”.

[2] One way to make this more precise is that progress would slow down more if you stopped using the AI than if you fired all the human researchers involved.

[3] Setting aside tasks where a core part of their value is that a human does them, such as certain types of art.

If you enjoyed this post, you'll probably enjoy these two as well:

https://helentoner.substack.com/p/the-term-agi-is-almost-useless-at

https://www.planned-obsolescence.org/p/six-milestones-for-ai-automation
